paiqo GmbH specializes in artificial intelligence, machine learning and data platform solutions for a steadily growing number of client companies across the DACH region. From our locations in Germany, Austria and Switzerland, we have already supported many companies in implementing strategic projects around AI and a wide range of practical data science applications. With our long-standing experience and continuously updated expertise, we make sure our customers are prepared for every use case and can steadily improve their business value and profitability through data.

Building on the Microsoft Cloud Platform, we implement machine learning projects individually, based on actual needs and aligned with each customer's specific goals. For operationalization, we specialize in the market-leading cloud service Microsoft Azure. This means that our specialists in data science, data engineering and data architecture, for all their individuality and flexibility, work with a technology that has proven itself worldwide for years in terms of reliability and breadth of services, and that keeps them up to date with the latest developments.

With Microsoft Ignite, one of the largest tech conferences of the year came to Vienna, and paiqo was there live! The theme "Limitless Innovation in the Era of AI" was not only the conference's guiding motif but was also reflected in the conversations at our booth. The enormous interest showed that artificial intelligence has not only arrived but has become indispensable for the future of business.

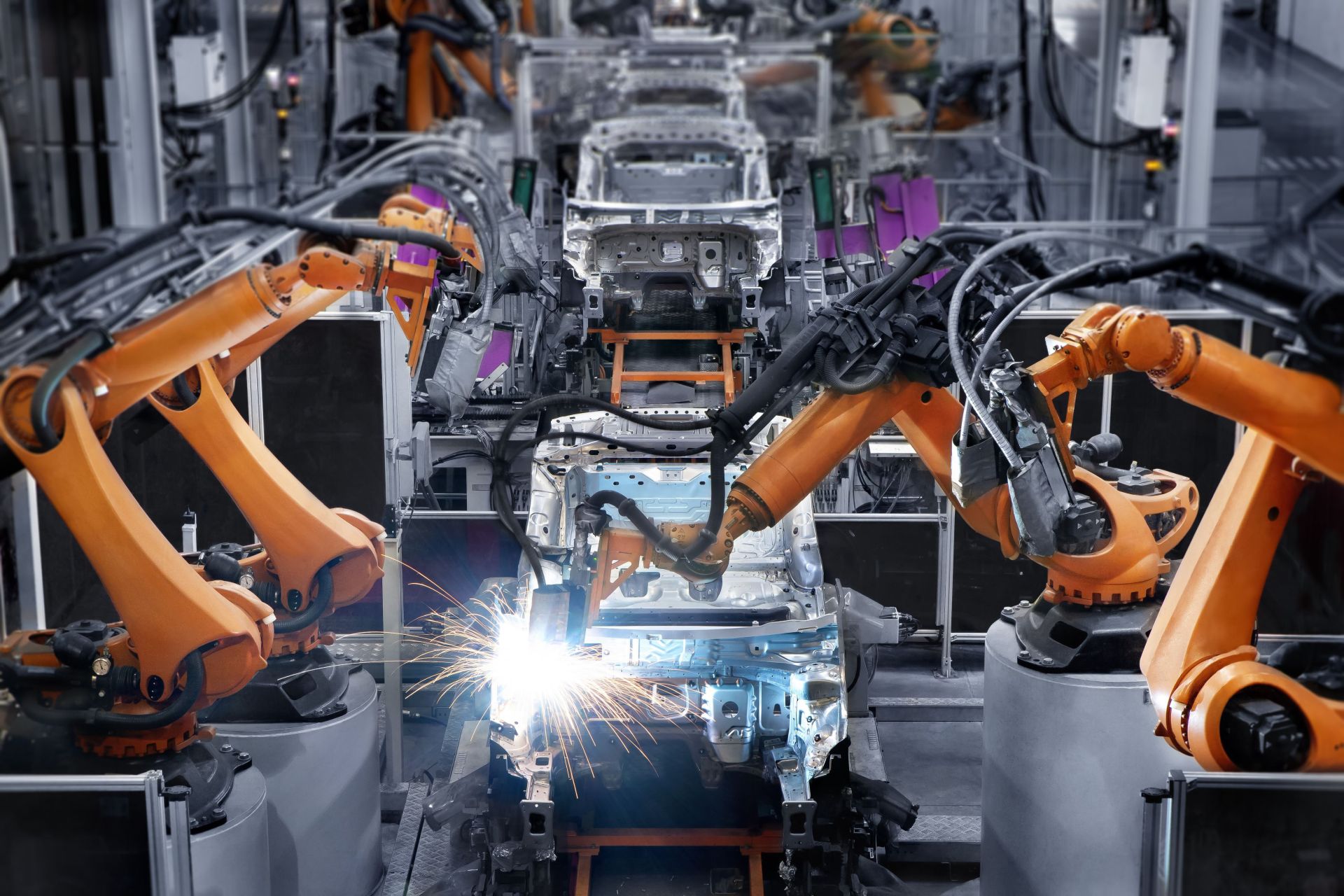

including in Manufacturing, Retail, Energy & Utilities and the Financial Services Industry (FSI)

Experience with a focus on AI and Data & Analytics platforms

Data Scientists, Data Engineers and Business Developers with many years of experience in successfully delivering AI and data science projects for many well-known companies.

Know-how

Methodological and technical (e.g. OpenAI, Databricks, Azure, Spark, Azure ML, in-memory, AI, machine learning).

Background

Computer scientists, mathematicians, statisticians, engineers, economists

Projects

A wide range of projects, including MLOps, OpenAI and ChatGPT, operationalization, data management, recommender systems, AI assistance systems, demand forecasting, coaching, …

Become part of the team, the success and the technological revolution. Apply now!

Whether startup, mid-sized company or large industrial enterprise: from anomaly detection, predictive quality, demand forecasting and predictive maintenance to AI assistance systems, OpenAI, image recognition and text processing, paiqo supports companies along the entire value chain. We achieve this above all through a young and dynamic team in which every employee brings passion and enthusiasm to the work.

Our work is only complete when, in addition to the technical applications you asked for, you have gained comprehensive user knowledge and, with our support, established a data culture in your organization that makes your structures and employees fully future-proof. That is why we continue to offer ongoing support and maintenance after implementation, so that using your AI solutions is as straightforward as it is effective!

To help you achieve greater success with data, we think about your data holistically. Preparation and processing, strategic use and the implementation of the best technical solutions: with paiqo, all of these areas mesh seamlessly. The result is comprehensive data structures in your company, along with entirely new potential for profitability and business value!

Contact request

Location Germany:

paiqo GmbH

Technologiepark 32

33100 Paderborn

Location Austria:

paiqo GmbH

Ungargasse 37

1030 Wien

Location Switzerland:

paiqo GmbH

Sulzstrasse 20

9403 Goldach, SG

contact[at]paiqo.com


Copyright © 2024 paiqo.